Design and Evaluation of an AI System for Multi-Dimensional Online Harm Detection

Wiam Kitar (Northwest Missouri State University), Chantal Roux (Northwest Missouri State University), Yousra Tabite (Northwest Missouri State University), Dr. Ajay Bandi (Northwest Missouri State University)

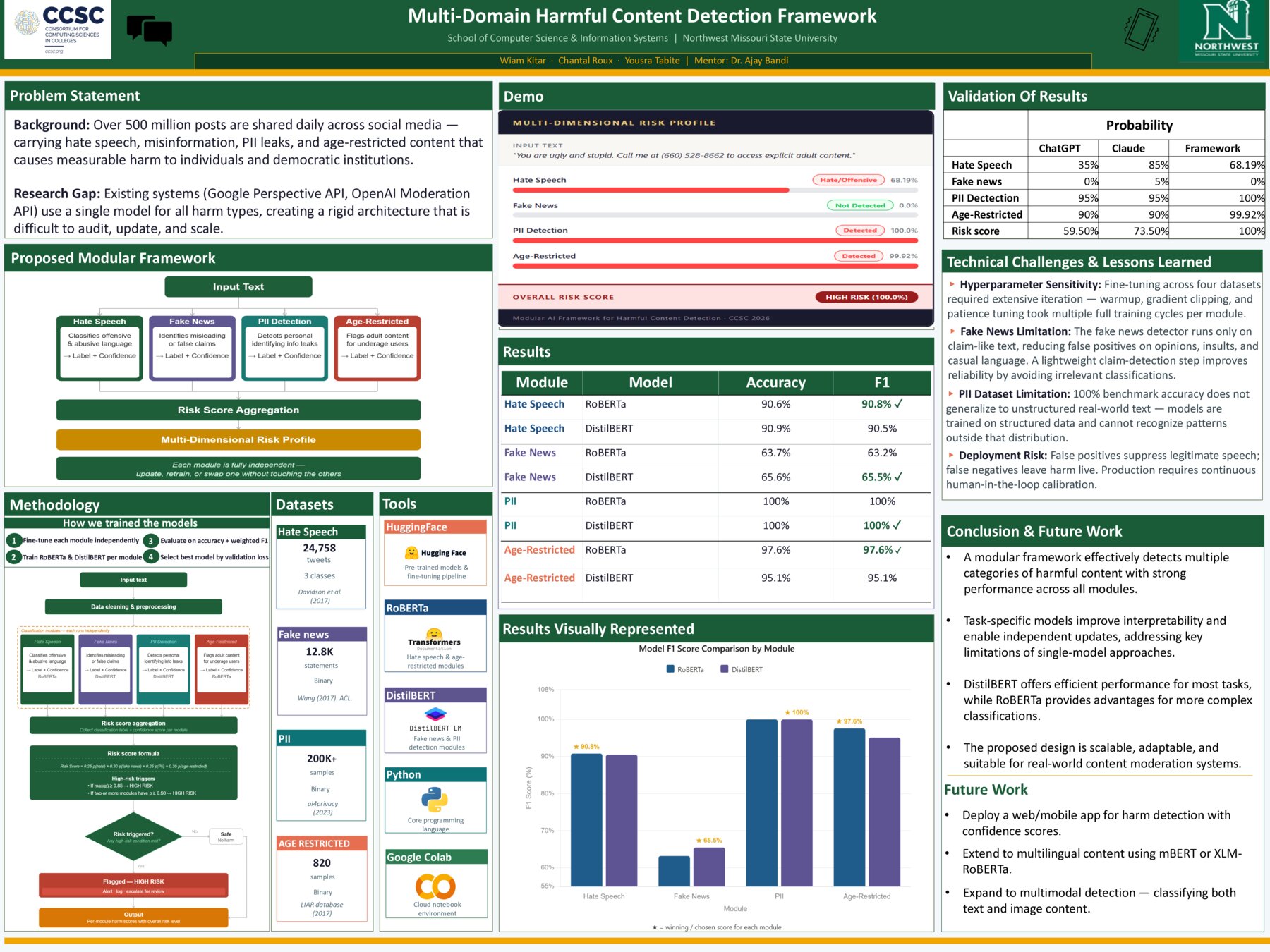

The growth of social media platforms has increased both the volume and the variety of harmful content online, including hate speech, fake news, personal information leaks, and age-restricted content. Many current moderation systems rely on a single artificial intelligence model to detect several categories of harmful content at once, which can limit interpretability and scalability. The objective of this project is to design a unified framework that identifies and represents multiple forms of online harm within one system. This project proposes a modular artificial intelligence framework composed of separate detection modules, each focused on one harm category: hate speech, fake news, personal information leaks, or age-restricted content. Each module's output is standardized, and the outputs are combined into a multi-dimensional risk profile that gives a clearer and more structured assessment of a piece of content. To develop and test the system, public datasets are analyzed to understand their labels and coverage, and neural network models such as BERT and RoBERTa will be explored and implemented in a GPU-supported environment. The expected results include competitive classification accuracy for the individual harm categories and improved interpretability compared with single-model moderation systems. The project is significant because it offers a scalable framework for more organized and interpretable harm detection and provides a foundation for future work on refining model selection and improving overall system performance.
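The modular design described above can be illustrated with a minimal sketch. It is not the project's implementation: the detector functions, category keys, keyword lists, and the 0.5 threshold are all hypothetical stand-ins, and in the proposed system each detector would instead wrap a trained classifier such as a fine-tuned BERT or RoBERTa model. The sketch only shows how per-category scores might be standardized to a common range and collected into one risk profile.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Set

# A detector maps text to a score. Hypothetical interface: the real modules
# would wrap trained classifiers rather than the toy rules used here.
Detector = Callable[[str], float]


def keyword_detector(keywords: Set[str]) -> Detector:
    """Toy stand-in for a classifier: 1.0 if any keyword occurs, else 0.0."""
    def score(text: str) -> float:
        lowered = text.lower()
        return 1.0 if any(k in lowered for k in keywords) else 0.0
    return score


# Simplified email pattern, used only to illustrate a personal-information module.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def pii_detector(text: str) -> float:
    """Toy stand-in: flags text that contains something shaped like an email."""
    return 1.0 if EMAIL_RE.search(text) else 0.0


@dataclass(frozen=True)
class RiskProfile:
    """Multi-dimensional risk profile: one standardized score per harm category."""
    scores: Dict[str, float]

    def flagged(self, threshold: float = 0.5) -> List[str]:
        # Categories whose score meets the (assumed) decision threshold.
        return sorted(n for n, s in self.scores.items() if s >= threshold)


def build_profile(text: str, detectors: Dict[str, Detector]) -> RiskProfile:
    """Run every module independently and clamp each output to [0, 1]."""
    scores = {}
    for name, detect in detectors.items():
        scores[name] = max(0.0, min(1.0, float(detect(text))))
    return RiskProfile(scores)


# Hypothetical module registry; keys and keywords are placeholders.
DETECTORS: Dict[str, Detector] = {
    "hate_speech": keyword_detector({"exampleslur"}),
    "fake_news": keyword_detector({"miracle cure"}),
    "pii_leak": pii_detector,
    "age_restricted": keyword_detector({"explicit"}),
}

profile = build_profile(
    "Contact me at jane@example.com for the miracle cure", DETECTORS
)
print(profile.scores)
print(profile.flagged())
```

Because each module is registered separately, a detector can be replaced or retrained without touching the others, and the profile keeps the per-category scores visible instead of collapsing them into a single label.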